Please support us by subscribing to our channel! Thanks a lot! 👏

Welcome

Another Sprint is gone, would you believe it? Let’s look at what we have accomplished! This will be an exhaustive review of our last sprint. We have a LOT to cover, so let’s get going!

Sprint Recap

For those who haven’t read all the posts (or seen all the videos), you can catch up either by visiting the previous posts:

or by binge-watching the YouTube playlists:

Alternatively, here is a short TL;DR:

I. Motivation

We are continuing to migrate our existing application from bare metal to the cloud.

II. Deployment Environment

After an initial bumpy ride, our deployment platform of choice has been Kubernetes, namely GKE (Google Kubernetes Engine).

III. Sprint Goal

The main aim of the sprint was to finally get the API-driven services (Auth, Billing and the Resource Server) deployed, so that we could test their interaction.

Just like the previous one, this sprint was planned during a sprint planning session, and this time it had 8 working days. Because we give our tasks t-shirt sizes (S, M, L), we use a light version of planning: we order the tasks by priority and then take as many as fit into the time allocated for the sprint.
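The planning rule above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the tool we actually use: the day cost of each t-shirt size, the convention that a lower priority number means more important, and the task names in the example are all hypothetical. Only the 8-day capacity comes from this sprint.

```python
from dataclasses import dataclass

# Hypothetical day cost per t-shirt size; the sizes are real, the numbers are assumed.
SIZE_TO_DAYS = {"S": 0.5, "M": 1.0, "L": 2.0}


@dataclass
class Task:
    name: str
    size: str      # "S", "M" or "L"
    priority: int  # assumption: lower number = more important


def plan_sprint(backlog: list[Task], capacity_days: float) -> list[Task]:
    """Take tasks in priority order until the sprint capacity is used up."""
    planned: list[Task] = []
    used = 0.0
    for task in sorted(backlog, key=lambda t: t.priority):
        cost = SIZE_TO_DAYS[task.size]
        if used + cost > capacity_days:
            break  # stop at the first task that no longer fits
        planned.append(task)
        used += cost
    return planned


# Hypothetical backlog, just to show the mechanics with an 8-day sprint.
backlog = [
    Task("task-a", "L", 1),
    Task("task-b", "M", 2),
    Task("task-c", "L", 3),
    Task("task-d", "S", 4),
    Task("task-e", "L", 5),
    Task("task-f", "M", 6),
]
sprint = plan_sprint(backlog, capacity_days=8)
print([t.name for t in sprint])
```

With these assumed costs, the first five tasks add up to 7.5 days and the sixth would push the total to 8.5, so it stays in the backlog. Stopping at the first task that doesn't fit, rather than skipping ahead to smaller ones, keeps the sprint strictly in priority order.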

Tasks

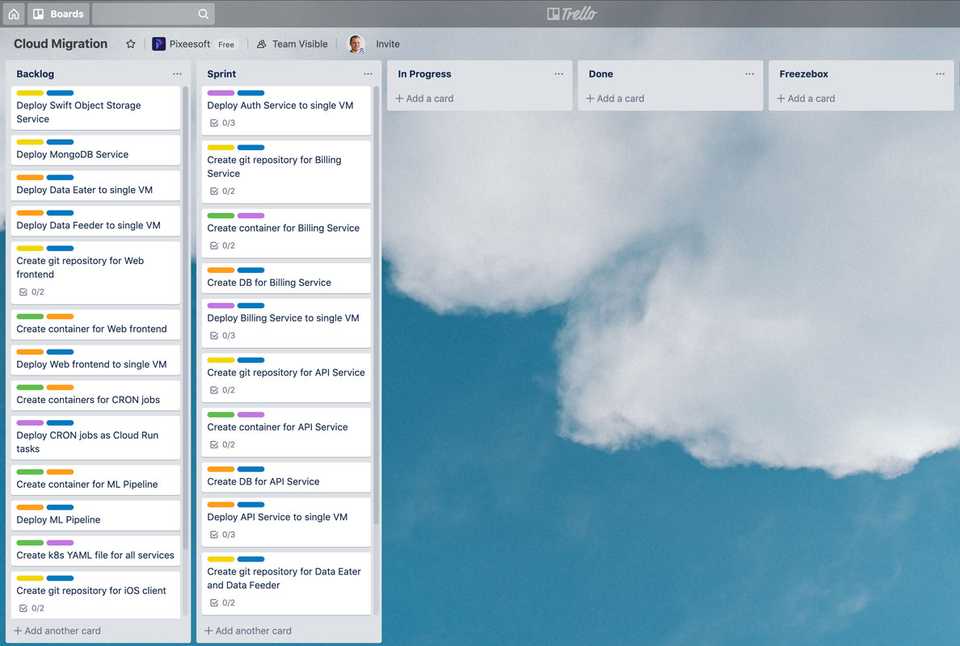

Let’s start by reminding ourselves what the board looked like at the end of planning: issues neatly organized in the Sprint lane, waiting to be finished.

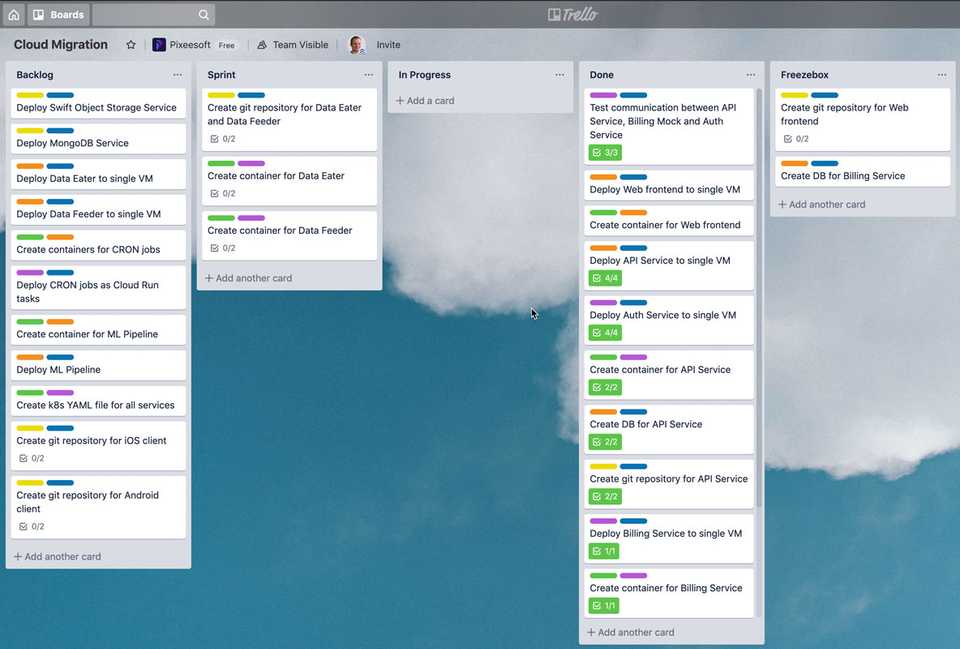

And here is the board after the sprint. Some tasks were left behind, and two tasks are in the freezebox. So what happened? Poor planning, perhaps? Before drawing that conclusion, we have to look back at the previous sprint, where we underestimated the tasks by giving them only 50% of the effort needed to complete them. Compared to that, this sprint's planning was actually quite successful. We also got really lucky: the main web frontend is deployed the same way as the authentication frontend, which let us move faster than anticipated and complete those tasks along with the API Server.

But now, let’s take a closer look at what caused some of the issues to be postponed.

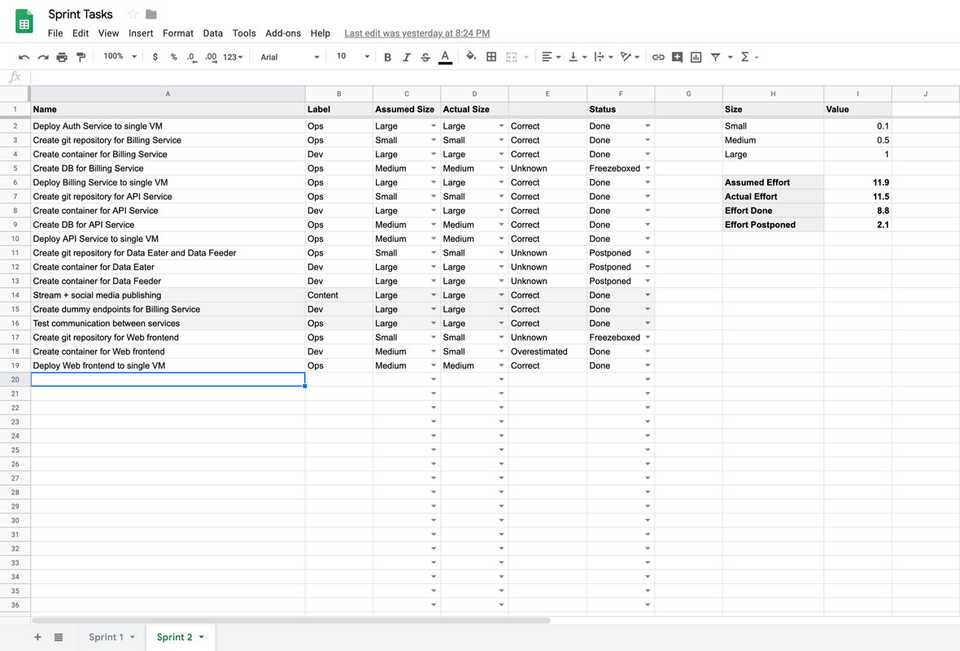

Here is a table outlining the tasks for the sprint. The tasks with a grey background were added during the sprint: they were tasks that I either forgot or didn’t know about during the planning phase.

Reason 1

Even though I slimmed down the video production (I seriously don’t understand how someone can do a daily vlog while working a job), all the related efforts combined still take a solid day of work: the streaming, IGTV post-production, creating social media posts, writing up weekly changelogs, etc.

Reason 2

The Billing Service was a complete spaghetti disaster: unusable outside of the previous company and unsuitable for the future. We had to pivot by creating a dummy endpoint that, from the point of view of the rest of the system, behaves like the previous version.

Reason 3

Integration testing: you can’t know in advance how much time it will take until you’re done, because there could be a hundred and one reasons why something doesn’t respond. That’s an issue I had to solve.

Other than those extra tasks, the majority of the sprint tasks had the right size and are marked as done, so I definitely see that as a success. There’s always room for improvement, though!

Improvements since last sprint

During the last review, I identified three issues to address:

- Optimistic estimates

- Missing subtasks

- Video production

So how did we do in these areas?

Optimistic estimates

I adjusted the estimates of all the related issues from the last sprint so that they reflected what we had learned. As a result, the tasks were defined more accurately.

Missing subtasks

With missing subtasks, you don’t really know until you know, which is a weird thing to say, I know. I hoped I could account for some of the unknown work by increasing the effort on some of the tasks, resulting in more accurate estimates, but I still had to add some subtasks during the sprint. I hope that the further we go, the more knowledge I’ll have, so that the task list is complete at planning and nothing new joins the sprint along the way.

Video production

As for the video production: leaving out Sprint Planning and Review, the daily streams and everything around them amounted to a day of work in total. With daily content, that’s 8 streams for only one day of effort, roughly an eighth of a day each. That’s a huge improvement over the last sprint’s 3 standup videos, which took a day and a half in total to make, or half a day each.

Done

So which tasks did I finish? Everything related to the API-driven services:

- The Authentication Service is deployed both as an API and as a user-facing interface.

- The main API Server is deployed both as an API and as a user-facing interface.

- The Billing Service is mocked (API only).

- The interaction between the services is working.

What about technical debt? Anything new? Well, yes:

- The Billing Service is a mock. It doesn’t do anything and doesn’t bill anyone. It will have to be written from scratch, as was intended, but for now it is technical debt.

- The deployment is YAML-based, but the underlying infrastructure isn’t defined as code. One way to address this is to use Terraform to bootstrap the whole infrastructure.

- The web frontend lives in the same repo as the API server it calls. This isn’t a terrible problem for now, because both are deployed in the LAMP environment. But for future frontend development with tools such as React, it would definitely be better to separate the two.

All of these go into the technical debt table. None of the previous items have been resolved yet, so we have to make sure the table doesn’t grow too big and eventually allocate some time during a sprint to pay down some of the technical debt.

The Good and The Bad

So what are the good outcomes of this sprint?

- I managed to make a minor change in the PHP codebase to implement health endpoints.

- I have a much better understanding of the nuances of kubectl commands.

- All the services seem to be running along with their interfaces!
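The health endpoints from the first point above were a PHP change that this post doesn’t show. Purely as an illustration, and in Python rather than PHP, here is a minimal sketch of what a health endpoint typically does: answer a cheap GET with HTTP 200 so that a Kubernetes liveness or readiness probe can poll it. The `/healthz` path, the JSON body and the `make_server` helper are assumptions, not the project’s actual endpoint.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Answers GET /healthz with 200 and a tiny JSON body; anything else gets 404."""

    def do_GET(self) -> None:
        if self.path == "/healthz":  # hypothetical path, not taken from the post
            body = json.dumps({"status": "ok"}).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format: str, *args) -> None:
        pass  # keep the frequent probe requests out of the logs


def make_server(port: int = 8080) -> HTTPServer:
    """Hypothetical helper: bind the health handler on all interfaces."""
    return HTTPServer(("0.0.0.0", port), HealthHandler)
```

Calling `make_server().serve_forever()` would serve it on port 8080, and a probe in the Deployment would then point its HTTP GET check at that path and port. Keeping the endpoint this cheap matters because the kubelet calls it repeatedly for as long as the pod runs.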

Where do we have room for improvement?

- I accumulated more technical debt without addressing anything old.

- I started a lengthy task on a Friday, which resulted in a bad weekend.

- I got held up by elementary things without realizing it.

I’d like to double down on the good outcomes and hopefully get better at the weaker points, so that the resulting system is resilient and, most importantly, liked by both customers and engineers.

Conclusion

So there it is, our sprint review. We went through the tasks, the improvements since last time, and the good and the bad. But now let’s hear from you: do you track your improvement from sprint to sprint? Do you measure your velocity and keep your engineers happy by giving them space during retrospectives? Let me know on any social platform: Facebook, Twitter or Instagram!